A Patent Practitioner's Guide to MCP

Why your LLM gets patent work wrong — and how to fix it.

If you work with patents and you aren't using an LLM like Claude or ChatGPT, you are making a mistake. Dollar for dollar, these tools are the single best investment available for patent work today. They can reason about claim language, analyze prior art, draft arguments, and synthesize technical information faster than any tool that came before them.

But if you've actually tried using them for serious patent work, you've probably run into some version of the same problems:

Hallucinated patent numbers. You ask Claude to find prior art and it gives you a nice list of patents, except that when you go to pull one up, the number doesn't exist.

A mix of real and fabricated references. The LLM searches the web, finds some legitimate patents and publications, and weaves them together with references it generated from patterns in its training data. The result is a patchwork of real and made-up information all presented with equal confidence. You have to check every single one, and you don't know which ones to trust until you do. This is actually worse than pure hallucination — at least if everything were fake, you'd know to start over.

Confident wrong answers. The analysis looks right. The reasoning is sound. But the conclusion is wrong because the LLM was working from a snippet or a partial HTML page — not the full text. Maybe it missed key claims, got the priority date wrong, or mischaracterized the scope of the invention because it never actually read the complete document. Or maybe it missed an easy-to-find key piece of prior art because LLMs just aren't very good at searching.

After enough of these experiences, you start to wonder: is the problem fundamental? Can LLMs just not do patent work reliably? Or is the problem me — am I prompting it wrong? Am I expecting too much? Should I be using it differently?

This uncertainty is the real cost. It's not just the wasted time on bad results. It's the erosion of trust that makes you second-guess every response, even the good ones. It's the decision to stop using AI for substantive work and limit it to things like summarization where the stakes are low.

Here's the thing: the problem isn't fundamental, and it probably isn't you. The problem is that your LLM doesn't have reliable access to patent data.

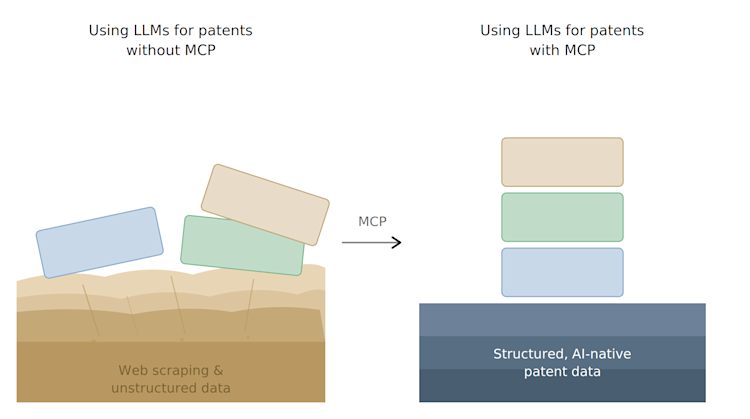

What's actually going wrong

LLMs like Claude can search the web, and they'll often find some real patent information when they do: a Google Patents page here, a USPTO listing there. But web search for patents is fundamentally unreliable. The LLM is scraping HTML pages that may be incomplete, parsing results that may not include full claims or descriptions, and filling gaps with pattern-matched text from its training data. It has no way to distinguish between what it found and what it generated. Neither do you.

The result is that patchwork problem: real patents mixed with fabricated ones, accurate claims next to hallucinated ones, genuine analysis built on a foundation that might be half sand. You can't tell which half without manually verifying everything — and at that point, what exactly is the AI saving you?

The core issue is that an LLM doing web searches for patents is not the same as an LLM connected to a structured patent database designed for AI. Web search is a general-purpose tool that sometimes returns patent information. What patent work requires is structured, reliable, complete access to patent data — the kind of access where the AI can search across millions of documents, retrieve full text, pull the right bibliographic data, check legal status history, and know with certainty that what it's working with is real.

This is where MCP comes in

MCP stands for Model Context Protocol. It's an open standard that lets AI tools connect directly to external databases and services, including patent databases.

When Claude is connected to a patent database via MCP, the dynamic changes completely. Instead of scraping web pages and hoping for complete information, it queries a structured database directly and gets structured patent information back in return.
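To make "queries a structured database directly" a little more concrete, here is a minimal sketch of what a tool call looks like on the wire. MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. Everything patent-specific here is an assumption for illustration: the tool name `search_patents`, its arguments, and the fields in the sample record are hypothetical, and a real patent MCP server defines its own.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP tool invocation as a JSON-RPC 2.0 request."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def extract_records(response):
    """Parse JSON records out of the text items in a tool result."""
    records = []
    for item in response["result"]["content"]:
        if item.get("type") == "text":
            records.extend(json.loads(item["text"]))
    return records


# Hypothetical tool name and arguments; a real server defines its own.
request = build_tool_call(
    1, "search_patents", {"query": "lidar sensor cleaning system", "limit": 5}
)

# Hypothetical response with placeholder values, not real patent data.
sample_record = {
    "publication_number": "EXAMPLE-0001",
    "title": "Placeholder title",
    "priority_date": "2020-01-01",
    "legal_status": "placeholder",
}
response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "content": [{"type": "text", "text": json.dumps([sample_record])}],
        "isError": False,
    },
}

records = extract_records(response)
print(json.dumps(request, indent=2))
print(records[0]["publication_number"])
```

In practice you never write this yourself. The AI client sends the request and reads the result for you. The point is that the model receives named fields from a database rather than whatever text happened to survive on a scraped page.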

The mix-of-real-and-fake problem goes away — not because the LLM got smarter, but because it's now working with a reliable data source instead of cobbling together fragments from the open web.

Ever heard the phrase garbage-in, garbage-out? Yep. Same story. Every tool on the market is working with the same level of LLM intelligence. The difference is what information you give it access to.

Not all patent MCPs are equal

Having an MCP is better than not having one. But not every MCP connection to patent data will give you the same results. There are two things you should be evaluating:

Is the patent data AI-native? There's a meaningful difference between patent data that has been structured for AI to work with and existing patent data that has simply been wrapped up in an MCP interface. When data is AI-native, it's organized and formatted so that the LLM can parse it cleanly: structured fields, consistent formatting, complete documents with all the metadata the AI needs to reason effectively. When it's just a wrapper around legacy data, the LLM can technically access it, and that's still better than nothing. But you'll notice the difference when the AI starts getting confused, chasing its tail, or returning odd results because the data didn't match its expectations.

Does the MCP have strong patent search? This is critical, often overlooked, and frankly much harder to get right. An MCP gives the LLM access to patent data, but context windows are limited, so you can't just dump an entire patent database into a conversation. The MCP needs to know how to find the right information and return only what's relevant. This is where search quality matters enormously, and the information retrieval technology that works for the rest of the world tends to fall apart with patents.

If the MCP is only using keyword or classification code searching, results will be very hit or miss. Even when keyword search works, it often returns hundreds of results — better than web search, but still too many for an LLM to process effectively. Semantic search can be better at ranking the most relevant results higher. But you need to be careful here. The semantic search technology used by most patent search providers is only about 50% reliable. That may sound like a bold claim, but anyone who has done serious benchmarking against real-world patent examination results knows the gap. The quality of the embeddings, the coverage of the corpus, and whether the search operates on full text or just abstracts and claims all make a massive difference in whether the MCP surfaces relevant prior art.

When evaluating a patent MCP, ask: What search technology is behind it? How was it built? What corpus does it cover? Has it been benchmarked against real patent examination outcomes? Has it been validated in automated workflows with an LLM? The answers will tell you whether you're getting a reliable research tool or a slightly better version of web search.
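If "benchmarked against real patent examination outcomes" sounds abstract, one common way to measure it is recall at k: of the references an examiner actually cited against an application, what fraction show up in the search tool's top k results? The sketch below is an illustration under that assumption, not a description of how any particular vendor runs its benchmarks. The document IDs are placeholders, not real patent numbers.

```python
def recall_at_k(ranked_results, examiner_citations, k):
    """Fraction of examiner-cited references found in the top k results."""
    cited = set(examiner_citations)
    if not cited:
        raise ValueError("need at least one examiner citation to score against")
    top_k = set(ranked_results[:k])
    return len(top_k & cited) / len(cited)


# Placeholder IDs: the search tool's ranked output for one application.
ranked = ["DOC-A", "DOC-B", "DOC-C", "DOC-D", "DOC-E"]

# Placeholder IDs: what the examiner cited for the same application.
cited = ["DOC-B", "DOC-E", "DOC-X"]

print(f"recall@3 = {recall_at_k(ranked, cited, 3):.2f}")
print(f"recall@5 = {recall_at_k(ranked, cited, 5):.2f}")
```

Averaged over a large set of examined applications, a number like this gives you something concrete to ask a vendor for. It also matters what k is: an LLM can only read so many documents per query, so recall in the top 5 or 10 is more telling than recall in the top 500.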

Why does this matter right now?

MCP is supported today. Claude, ChatGPT, and most major AI platforms support MCP connections right now. This isn't a future technology. It's available infrastructure that is already used by some patent professionals, while most don't even know it exists.

Enterprise AI spend is growing. Most large IP departments and law firms now have enterprise AI subscriptions. But without MCP connections to relevant data sources, these subscriptions are dramatically underutilized -- especially in patent work. You're paying for an engine with no fuel.

Your competitors are figuring this out. The firms and in-house teams that connect their AI tools to real patent data will have a structural advantage in speed, thoroughness, and cost.

What should you do?

If you've been disappointed by LLMs for patent work, don't give up on them. Their ability to reason is already powerful and will only get better. What has been missing is the data connection. MCP is the fix.

If you have an enterprise AI subscription, find out whether your organization is connecting it to any patent data sources via MCP. If not, you're only using a fraction of what you're paying for.

If you're evaluating patent tools, ask whether they offer MCP access and then ask the harder questions too. Is the data structured for AI? What search technology is behind it? Has it been benchmarked? A tool that works inside your AI workflow is fundamentally more useful than a standalone platform, but only if the data and search quality are there to back it up.

If you manage an IP team, make sure your team has access to MCP-connected AI and uses it. The difference is huge, and it will help you make smarter choices about which IP-specific tools you actually need to buy.

Experiment with different prompts and skills. MCP solves the data problem, but getting the most out of AI still requires learning how to ask good questions, structure your requests, and guide the reasoning process. Some MCPs come with built-in skills that reflect well-curated and battle-tested prompts already. You can also find great open resources online with patent-specific prompts and communities that are sharing best practices. The combination of reliable data access and effective prompting is where the real leverage is (that and context management, but that's for another post).

The bottom line

LLMs are not the problem or the solution. The problem is process and data. Tools that work with unreliable information give unreliable results. For patent work, access to an AI-native structured patent database is critical to making LLMs work well.

MCPs make this very easy to do. You do not need to learn a new interface. You're not bound by the constraints of whatever software you've purchased. MCP is an open protocol, a standard way to connect AI tools to data sources. It plugs directly into the LLM you are already using and grounds it in reliable, verifiable, factual data. For patent work this is the single most important thing standing between where patent AI is today and where it's going.

The patent professionals and leaders who understand this now — who learn to work with AI that's connected to real data, and who build workflows that leverage that connection — will define how this technology gets used across the profession. Not only will you have a solid understanding of what LLMs are fundamentally capable of, you'll have a better understanding of which tools you need to buy, which you should build, and what needs to change about your workflow.

Join the MCP Waitlist

We've been running a patent MCP with a small group of IP teams, connecting Claude and ChatGPT to 185 million patents with AI-native search. Due to high demand, we're rolling this out slowly and prioritizing feedback with each cohort.

If you're interested in getting access, we invite you to join the waitlist.