Benchmarking AI search components and LLMs

To craft more reliable and intelligent AI systems, we need a better understanding of individual search components. In our recent post about what's missing in AI patent search, we noted that many published patent search benchmarks compare individual retrieval mechanisms like an embedding-based search against a complete system like ChatGPT or Perplexity Patents. This is misleading because systems like those are only as good as the retrieval technology they have access to. Measuring the building blocks is the real prerequisite for building AI agents that actually work.

This post is that measurement. We took 906 US patents challenged in PTAB IPR proceedings and benchmarked a range of retrieval approaches against them. We also compare against Claude to better understand the strengths and weaknesses of LLMs.

Understanding the gaps in retrieval technology matters: we need to know both where each component works and where it fails. That lets us combine existing tools into better systems, in addition to building better foundational tools. In Amplified’s case, we believe the gap is a lack of high-quality embeddings that use the full text of patents rather than just the claims.

The benchmark

We chose PTAB IPR cases because they come with two sources of ground truth:

- Examiner citations: references found by patent examiners during prosecution, and the closest proxy for what a competent professional searcher finds. We excluded applicant-cited references (IDS filings), since these are often noisy.
- PTAB invalidity references: the strongest, most adversarially vetted prior art in the patent system, found by motivated litigators and expert searchers with real money on the line.

For each of the 906 challenged patents, we searched a total pool of ~185M global patents. We limited the results from each approach to the first 10,000 publications with filing dates before the challenged patent’s priority date, and then grouped those publications by simple patent family. We measured the rank of each known prior-art reference under each approach, so we could see which approaches capture which art, and at what rank.
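A minimal sketch of that per-approach measurement, assuming each approach returns a ranked list of (publication id, filing date) pairs and that a publication-to-simple-family lookup is available. The function and parameter names are hypothetical, not Amplified’s code.

```python
from datetime import date


def rank_known_art(results, priority_date, family_of, known_families, cap=10_000):
    """Return the 1-based family rank of each known prior-art family (None if missed).

    results: ranked list of (publication_id, filing_date) pairs from one approach.
    family_of: hypothetical lookup from publication_id to simple family id.
    known_families: ground-truth families (examiner citations + PTAB art).
    """
    # Keep publications filed before the challenged patent's priority date,
    # then take the first `cap` of them.
    eligible = [pub for pub, filed in results if filed < priority_date][:cap]

    # Collapse publications into simple families, keeping first-seen order.
    families = []
    seen = set()
    for pub in eligible:
        fam = family_of[pub]
        if fam not in seen:
            seen.add(fam)
            families.append(fam)

    position = {fam: i + 1 for i, fam in enumerate(families)}
    return {fam: position.get(fam) for fam in known_families}


# Toy example: US2 is filed after the priority date; EP1 shares a family with US1.
results = [
    ("US1", date(2010, 1, 1)),
    ("EP1", date(2010, 2, 1)),
    ("US2", date(2016, 1, 1)),
    ("US3", date(2011, 5, 1)),
]
family_of = {"US1": "F1", "EP1": "F1", "US2": "F2", "US3": "F3"}
ranks = rank_known_art(results, date(2015, 6, 1), family_of, {"F2", "F3"})
```

Here F3 lands at family rank 2 (US1 and EP1 collapse into one family at rank 1), while F2 is never reached because its only publication postdates the priority date.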

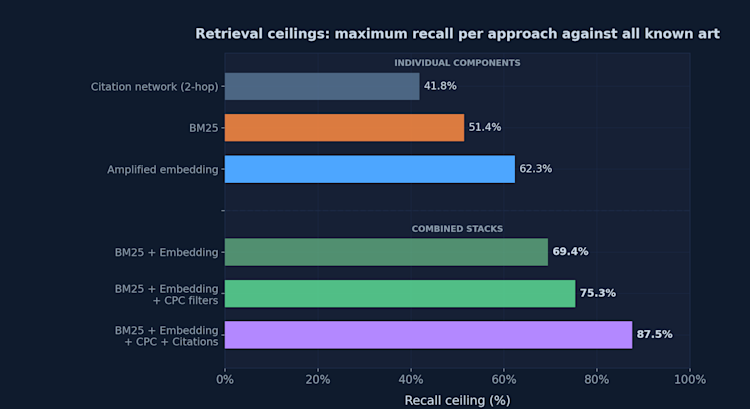

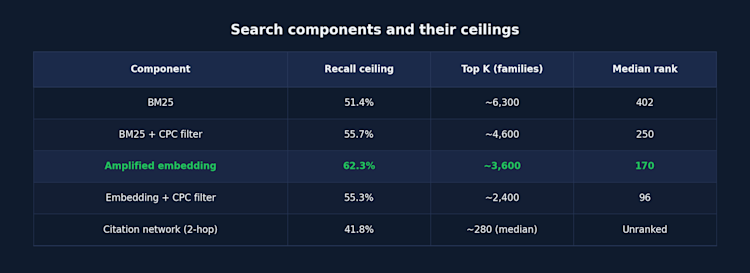

Search components and their ceilings

Every retrieval component has a recall ceiling: a theoretical limit on how much relevant prior art it can find. We also look at candidate pool size and ranking. Together they tell both sides of the story: how much total art an approach could theoretically find, and how efficiently you would find it.
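All three views can be computed from per-query ranks. This sketch assumes ranks are stored per challenged patent as `{reference: rank or None}`. It also reports the candidate pool as a per-query mean, which is our assumption about how the pool figures are aggregated.

```python
from statistics import median


def summarize(per_query_ranks, per_query_pool_sizes):
    """Aggregate recall ceiling, candidate pool size, and median rank.

    per_query_ranks: one {reference: rank or None} dict per challenged patent.
    per_query_pool_sizes: candidate families returned for each challenged patent.
    """
    all_ranks = [r for ranks in per_query_ranks for r in ranks.values()]
    found = [r for r in all_ranks if r is not None]
    return {
        # How much of the known art the approach surfaces at any depth.
        "recall_ceiling": len(found) / len(all_ranks),
        # How many families a reviewer faces per query (assumed to be a mean).
        "mean_pool": sum(per_query_pool_sizes) / len(per_query_pool_sizes),
        # How early the found references typically appear.
        "median_rank": median(found) if found else None,
    }


stats = summarize([{"a": 1, "b": None}, {"c": 5, "d": 9}], [100, 300])
```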

We used BM25 as a baseline because it is widely recognized as the de facto standard for keyword-based retrieval. We also tested a vector k-nearest-neighbor (kNN) search using Amplified’s full-text embeddings. Both were run against the full 185M+ patent index without any filters applied. We also tested CPC-code-limited search and citation network traversal. Finally, we compared against an LLM-generated keyword query approach.
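For readers unfamiliar with BM25, here is a minimal in-memory sketch of Okapi BM25 scoring over pre-tokenized documents. The `k1=1.2`, `b=0.75` defaults and the Lucene-style IDF are common choices we assume here; the post does not say which BM25 variant or parameters were used, and production engines score via inverted indexes rather than looping over every document.

```python
import math
from collections import Counter


def bm25_scores(query_terms, docs, k1=1.2, b=0.75):
    """Score each tokenized document against the query with Okapi BM25."""
    n = len(docs)
    avgdl = sum(len(d) for d in docs) / n
    # Document frequency: how many documents contain each term.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for t in query_terms:
            if tf[t] == 0:
                continue
            # Lucene-style IDF, which stays positive even for very common terms.
            idf = math.log(1 + (n - df[t] + 0.5) / (df[t] + 0.5))
            # Term-frequency saturation (k1) and document-length normalization (b).
            norm = tf[t] * (k1 + 1) / (tf[t] + k1 * (1 - b + b * len(d) / avgdl))
            score += idf * norm
        scores.append(score)
    return scores


docs = [
    ["rotor", "blade", "turbine"],
    ["battery", "cell", "anode"],
    ["turbine", "blade", "pitch", "control"],
]
scores = bm25_scores(["turbine", "blade"], docs)
```

The battery document scores zero because it shares no terms with the query. That is the same property that makes BM25 miss art describing the same idea in different words.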

A few things are immediately apparent. Amplified’s embedding finds more art than BM25 (62.3% vs 51.4%), from a smaller candidate pool (~3,600 vs ~6,300 families), with better ranking (median rank 170 vs 402). It does more with less.

The critical insight here is how little BM25 and embeddings overlap. They find fundamentally different art. Of the 906 ground-truth pairs, only 401 are found by both. Amplified’s embedding finds 163 references that BM25 misses entirely, 121 of them §103 obviousness art, which is some of the hardest art to surface. BM25, in turn, finds 65 that our embedding misses. Neither is complete on its own; they are genuinely complementary.
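The overlap counts reproduce the headline recall figures. This check uses only numbers reported in this post. The last value is the share found by at least one of the two approaches under the same setup as the 62.3% and 51.4% figures.

```python
TOTAL = 906           # ground-truth pairs in the benchmark
BOTH = 401            # found by both BM25 and the embedding
EMBEDDING_ONLY = 163  # found only by the embedding
BM25_ONLY = 65        # found only by BM25

embedding_recall = (BOTH + EMBEDDING_ONLY) / TOTAL          # 564 / 906
bm25_recall = (BOTH + BM25_ONLY) / TOTAL                    # 466 / 906
either_recall = (BOTH + EMBEDDING_ONLY + BM25_ONLY) / TOTAL  # 629 / 906

print(f"{embedding_recall:.1%} {bm25_recall:.1%} {either_recall:.1%}")
# prints: 62.3% 51.4% 69.4%
```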

On citations: we excluded direct backward citations of the challenged patent, since those are trivial to find. The 41.8% figure therefore reflects non-trivial citation recall, reached by traversing the citation network through multiple forward and backward hops. However, PTAB IPR cases involve patents with rich, well-developed citation histories, so the data is naturally biased toward this approach working well. Citation networks are also less useful at the time of filing, because they haven’t developed yet. So while citation networks are widely accepted as powerful, they are also limited, and the results shown here likely overstate their efficacy.
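A sketch of multi-hop citation traversal, assuming the citation graph is available as a dict from each publication to the publications it cites. The two-hop default and the choice to still traverse *through* the excluded direct backward citations are our assumptions; the post says only that multiple forward and backward hops were used. Candidates would still pass through the priority-date filter, which removes later-filed forward citations from the prior-art results.

```python
from collections import defaultdict, deque


def citation_candidates(seed, cites, max_hops=2):
    """Publications within `max_hops` forward/backward citation hops of `seed`,
    excluding the seed itself and its direct backward citations.

    cites: {publication_id: list of publication_ids it cites}
    """
    # Invert the graph so forward citations (who cites whom) can be followed too.
    cited_by = defaultdict(list)
    for src, targets in cites.items():
        for target in targets:
            cited_by[target].append(src)

    direct_backward = set(cites.get(seed, ()))
    hops = {seed: 0}
    queue = deque([seed])
    while queue:  # breadth-first, so hops[n] is the shortest hop count to n
        node = queue.popleft()
        if hops[node] == max_hops:
            continue
        for nxt in list(cites.get(node, ())) + cited_by.get(node, []):
            if nxt not in hops:
                hops[nxt] = hops[node] + 1
                queue.append(nxt)

    return {n for n in hops if n != seed and n not in direct_backward}


# P cites A; A cites B; C also cites A; D cites P.
cites = {"P": ["A"], "A": ["B"], "C": ["A"], "D": ["P"]}
candidates = citation_candidates("P", cites)
```

A itself is excluded as a direct backward citation, but hopping through it still reaches B (what A cites) and C (another document citing A).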

On classification codes: CPC search has a theoretical recall ceiling of 67%, which is the share of known prior-art references that share at least one CPC group with the challenged patent. In other words, one-third of the most important prior art lives outside the patent’s own classification.

Because searching within multiple CPC codes quickly yields far more than 10,000 results, we took the top 10,000 publications within the CPC-restricted universe as ranked by Amplified’s embeddings and by BM25. Limiting the search universe to overlapping CPC codes made BM25 much better: it found 133 references that the unfiltered BM25 top 10,000 would miss. That makes sense, since narrowing the universe by technology grouping compensates for the inherent limitations of BM25’s keyword-based approach. CPC codes, on the other hand, hardly help embeddings at all. Embeddings already understand technology conceptually, so adding the filter actually hurts overall retrieval by shrinking the pool. This is exactly what you’d expect from a strong semantic model, and it reinforces why a keyword-heavy approach like BM25 pairs well with Amplified’s full-text embeddings.
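Both CPC measurements can be sketched in a few lines. We assume each document carries a set of CPC group codes; the post does not specify whether “group” means main group or subgroup. The helper names and the example codes are illustrative.

```python
def shares_cpc(groups_a, groups_b):
    """True if two documents have at least one CPC group in common."""
    return not set(groups_a).isdisjoint(groups_b)


def cpc_ceiling(patent_groups, known_refs, cpc_of):
    """Best possible recall if the search is restricted to the patent's CPC groups."""
    hits = sum(shares_cpc(patent_groups, cpc_of[ref]) for ref in known_refs)
    return hits / len(known_refs)


def cpc_filtered_top_k(ranked, patent_groups, cpc_of, k=10_000):
    """Keep a ranking's order but drop documents outside the patent's CPC groups."""
    return [doc for doc in ranked if shares_cpc(patent_groups, cpc_of[doc])][:k]


cpc_of = {
    "r1": {"H04L 9/00"},
    "r2": {"G06F 21/00"},
    "r3": {"H04L 9/00", "G06F 21/00"},
    "d4": {"A61B 5/00"},
}
patent_groups = {"H04L 9/00"}
```

With these inputs the ceiling is 2/3: r2 can never be found by a CPC-restricted search, however good the ranking inside the filter is.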

Combining components

Here’s what happens when you stack complementary approaches together:

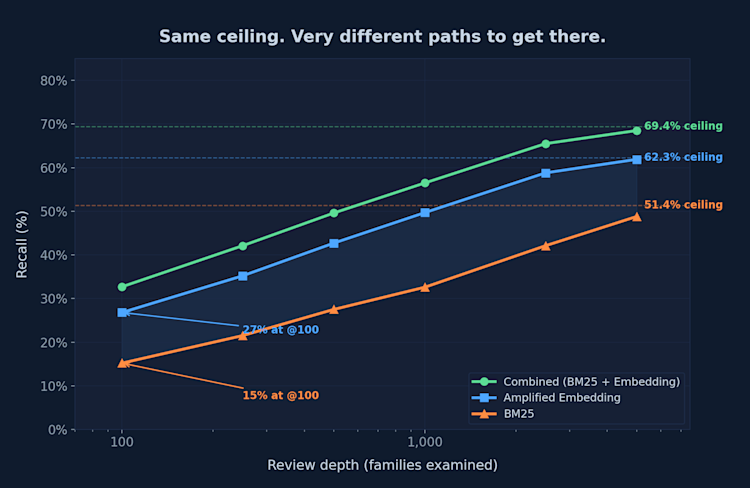

BM25 and embedding reach nearly identical ceilings (~66%) if you go far enough out. The big difference is that embeddings get there much faster, with roughly half the candidate pool. Combining them raises the ceiling to 75.3%, because they surface different references rather than duplicating each other’s work. Adding the citation network lifts the ceiling to 87.5%, though as noted above, citation recall in this dataset may benefit from PTAB cases having richer-than-average citation histories.
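Stacking components amounts to a set union over what each one finds and what each one returns. In this sketch with hypothetical inputs, the ceiling rises only when components find different references, while the candidate pool grows with every component added.

```python
def combine(found_by_component, pool_by_component, total_known):
    """Union several components' results.

    found_by_component: {name: set of known references it finds}
    pool_by_component: {name: set of candidate families it returns}
    Returns (combined recall ceiling, combined candidate pool size).
    """
    found = set().union(*found_by_component.values())
    pool = set().union(*pool_by_component.values())
    return len(found) / total_known, len(pool)


ceiling, pool_size = combine(
    {"bm25": {1, 2}, "embedding": {2, 3}, "citations": {4}},
    {"bm25": {1, 2, 10, 11}, "embedding": {2, 3, 10}, "citations": {4, 12}},
    total_known=5,
)
```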

Ranking is needed to make combination practical

Combining components raises the ceiling, but it also multiplies the candidate pool. Looking at the recall ceiling alone exaggerates the practical gains and misses the reason embeddings matter most: ranking quality.

Let’s look at BM25 and our full-text embeddings at a more practical review depth of 100 (recall@100). Here, embeddings find 32% of all prior art, while BM25 finds 22%. BM25 needs roughly 5x the depth to reach the same recall. The ceiling is the same; the practical experience is entirely different.
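Recall at a fixed depth, and the depth needed to reach a target recall, can both be computed directly from a list of ranks (`None` meaning not found). A minimal sketch; the function names are ours.

```python
def recall_at_k(ranks, k):
    """Share of known references ranked within the top k (None = never found)."""
    return sum(1 for r in ranks if r is not None and r <= k) / len(ranks)


def depth_for_recall(ranks, target):
    """Smallest review depth at which recall reaches `target`, or None if it never does."""
    found = sorted(r for r in ranks if r is not None)
    for i, rank in enumerate(found):
        # After reviewing down to `rank`, i + 1 known references have been seen.
        if (i + 1) / len(ranks) >= target:
            return rank
    return None


ranks = [3, 10, 50, None, 400]
```

Calling `depth_for_recall` on each approach’s ranks with the same target gives the kind of depth ratio behind the 5x comparison above.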

When both approaches find the same reference, Amplified’s embedding ranks it higher 65.3% of the time, by a median of 642 positions. For harder-to-find art such as Y-category references, that gap widens to 1,383 positions.
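A sketch of the head-to-head comparison, assuming each approach’s ranks are stored as `{reference: rank}` for found references only. We assume the median gap is taken over the references the embedding ranks higher; the post does not say whether it is computed over those wins or over all shared references.

```python
from statistics import median


def head_to_head(emb_ranks, bm25_ranks):
    """Compare two approaches on references both of them found.

    emb_ranks, bm25_ranks: {reference: rank} for found references only.
    Returns (share of shared references the embedding ranks higher,
             median number of positions gained on those references).
    """
    shared = emb_ranks.keys() & bm25_ranks.keys()
    if not shared:
        return None, None
    # Positive gap: the embedding places the reference earlier than BM25 does.
    gaps = [bm25_ranks[ref] - emb_ranks[ref] for ref in shared]
    wins = [g for g in gaps if g > 0]
    return len(wins) / len(shared), (median(wins) if wins else None)


share, median_gain = head_to_head(
    {"a": 5, "b": 100, "c": 20, "d": 1},
    {"a": 50, "b": 40, "c": 220, "e": 3},
)
```

Filtering both dicts down to Y-category references first gives the per-category version of the same comparison.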

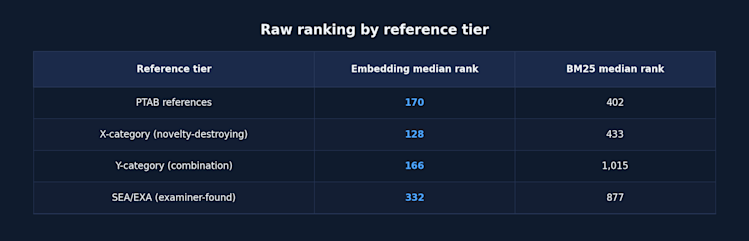

The pattern holds across different tiers of references:

Y-category references (combination art) are particularly telling. Embeddings find them at a median rank of 166, compared to 1,015 for BM25: a 6x difference. Because these are combination references, only some of their terms overlap with the challenged patent, so it’s logical that BM25 ranks them lower. A strong embedding doesn’t just find more art; it learns concepts and reliably pushes relevant art to the top even when the relationship is indirect. Applied to a 13,000-candidate pool, that turns an unreasonable task into a workable list.

If anything, the ranking quality reported here is understated. We’re using raw kNN rankings: simple cosine similarity against the full index, without any reranking or post-processing. A true reranker improves results further. More on that in a future post.
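For reference, raw kNN ranking is just ordering documents by cosine similarity to the query vector. This exact, brute-force sketch is only practical for small indexes. At 185M+ documents one would typically use an approximate nearest-neighbor index, and the post does not describe how Amplified serves its search.

```python
import heapq
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def knn_cosine(query, index, k):
    """Exact top-k document ids by cosine similarity over {doc_id: vector}."""
    return heapq.nlargest(k, index, key=lambda doc_id: cosine(query, index[doc_id]))


index = {"a": [1.0, 0.0], "b": [0.7, 0.7], "c": [0.0, 1.0], "d": [-1.0, 0.0]}
top = knn_cosine([1.0, 0.1], index, 2)
```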

Where LLMs fit, and where they don’t

There’s a natural question that arises: what role do LLMs play in all of this? The answer matters because it’s where most of the industry’s attention is focused right now.

LLMs are not search components; they are analysis and planning tools. When you use an LLM to orchestrate a search system (deciding which components to run, how to combine results, how to reason about what it finds), it has the potential to be transformative. But when you use an LLM as a search component, generating queries and directing retrieval, the results are deceptive.

We tested this directly. We had Claude generate optimized keyword queries for each of our 906 PTAB cases, similar to how general-purpose LLMs approach a patent search. We gave it a small advantage: results were ranked using Amplified’s patent embeddings, and we allowed the LLM to read through far more patents than it would typically see in its standard harness. Even so, the recall ceiling was just 34.4% across ~9,500 candidate families. That is worse than BM25, which reaches 51.4% from a smaller pool (~6,300 families). The LLM can only find what it can articulate in keywords, and that is structurally a hard bottleneck.
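The bottleneck can be made concrete: whatever ranks the results afterward, the keyword query first decides which documents are eligible at all. This sketch assumes a query made of OR-groups that are ANDed together (a hypothetical stand-in for an LLM-written Boolean query) and pre-extracted term sets per document. The similarity scores are made up for illustration.

```python
def matches(query, terms):
    """query: OR-groups that must all match, e.g. [{"battery", "cell"}, {"anode"}]."""
    return all(group & terms for group in query)


def keyword_then_rank(query, doc_terms, similarity, k=10_000):
    """Keep documents the keyword query matches, then order them by `similarity`."""
    eligible = [doc for doc, terms in doc_terms.items() if matches(query, terms)]
    return sorted(eligible, key=similarity, reverse=True)[:k]


def keyword_ceiling(query, known_refs, doc_terms):
    """Best possible recall: no ranking can recover a reference the query filtered out."""
    return sum(matches(query, doc_terms[ref]) for ref in known_refs) / len(known_refs)


doc_terms = {
    "r1": {"battery", "anode"},
    "r2": {"cell", "cathode"},
    "r3": {"accumulator", "electrode"},  # same idea, different vocabulary
}
query = [{"battery", "cell"}, {"anode", "cathode"}]
similarity = {"r1": 0.2, "r2": 0.9, "r3": 0.99}.get  # stand-in embedding scores
```

Even though r3 has the highest similarity score, it never appears in the output, because the keywords excluded it before ranking ran.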

LLMs are good at reasoning about patents. They are not good at finding them. We tested other LLM-driven strategies as well, but they produced worse results. Because LLMs are good at understanding and presenting results, they leave a good impression, but the ceiling is much lower than the user experience suggests. There’s no question that it feels great to get a couple of strong results right away, and as long as it doesn’t hallucinate, an LLM can often surface one or two useful results without any additional noise.

That’s actually more of an opportunity than a critique. The right role for LLMs is at the system level: orchestrating strong search components, reasoning about results, and iterating intelligently. We’re eager to share more on this topic in the future, and we’ve already hinted at it in our recent post about MCPs.

Not all embeddings are the same

Almost every patent search tool now uses embeddings in some form, but embeddings are a broad category. Most tools use PatentBERT, Sentence-BERT, or similar off-the-shelf transformer models, sometimes with fine-tuning on top. These are typically applied at the sentence or claim level.

The strengths and weaknesses of BERT and BM25 are well researched, and combining both approaches is common. Yet BERT, even after patent-specific fine-tuning (as in PatentBERT), still struggles to make a meaningful contribution to information retrieval tasks. BERT wasn’t designed for the kind of long-context understanding that patents require: standard BERT models accept at most 512 tokens, a small fraction of a typical full patent document.

Amplified has focused on building patent-specific embeddings for long contexts, so we want to be clear that Amplified’s embeddings are very different. To our knowledge, no one else is doing this; if you are, we’d love to talk and are happy to include you in an update to this report. Amplified’s embeddings are trained on the entire global patent corpus, represent full patent documents, and are purpose-built for retrieval.

That is why Amplified’s embeddings outperform every other available component, finding art that nothing else surfaces and ranking it on average 2–6× higher. Building a genuinely new embedding that outperforms alternatives is a major undertaking: years of work on architecture, training data, and patent-specific optimization. But the key insight is that this is a different information retrieval approach from existing methods. On its own, it gives you better results, faster. The real achievement, though, is combining it with other techniques to raise the ceiling on automated recall. In other words, this is a critical missing building block in the modern patent search toolkit, and having it fundamentally changes what an intelligent system is capable of.

While most other vendors are now working more on the reasoning layer, there are a few others working on the retrieval problem and we want to acknowledge their contribution as well. IPRally uses a knowledge graph approach that encodes patent relationships structurally — a genuine advance over keyword search. We haven’t benchmarked them directly and would welcome the comparison.

What a real system looks like

A good system understands the tools available to it and has domain knowledge about how to use them. It combines strong search components that each cover different parts of the retrieval space and uses LLM-driven reasoning to orchestrate them intelligently: deciding what to search, how to combine results, what to look at more closely, and when to iterate.
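A toy sketch of that architecture, with a plain function standing in for the LLM planner. Everything here is hypothetical: the component names, the planner interface, and the round-robin merge, which is a simple stand-in for whatever fusion or reranking a real system would use.

```python
def orchestrate(patent, components, plan, max_rounds=3):
    """Run the components a planner picks, round by round, then merge their rankings.

    components: {name: callable(patent) -> ranked list of family ids}
    plan: callable(patent, results_so_far) -> component names to run next ([] to stop).
          In a real system an LLM would make this decision; here it is a plain function.
    """
    results = {}
    for _ in range(max_rounds):
        chosen = [name for name in plan(patent, results) if name not in results]
        if not chosen:
            break
        for name in chosen:
            results[name] = components[name](patent)

    # Round-robin merge: each list's 1st item, then each list's 2nd, and so on.
    merged, seen = [], set()
    lists = list(results.values())
    for i in range(max((len(lst) for lst in lists), default=0)):
        for lst in lists:
            if i < len(lst) and lst[i] not in seen:
                seen.add(lst[i])
                merged.append(lst[i])
    return merged


components = {
    "bm25": lambda patent: ["F1", "F2", "F3"],
    "embedding": lambda patent: ["F2", "F4"],
    "citations": lambda patent: ["F5"],
}


def plan(patent, results):
    # Hypothetical policy: start with both text searches, then expand via citations.
    if not results:
        return ["embedding", "bm25"]
    if "citations" not in results:
        return ["citations"]
    return []


merged = orchestrate("US-hypothetical", components, plan)
```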

The recall ceiling for that kind of system is extremely high. Our data shows the combined ceiling across all components exceeds what even expert human searchers reliably achieve. A fully automated system that reaches 87%+ recall on a diverse dataset, including both examiner citations and adversarially vetted prior art, is already performing at or above expert human level. With the addition of rerankers, other retrieval techniques, and a human-in-the-loop feedback system, we expect to raise this ceiling even higher.

Building on this

We’ve been working on solving prior art search for nearly a decade, building the foundational components it needs and understanding how they fit together. Our full-text embeddings raise the ceiling, improve ranking, and find art that other approaches don’t. They were a big missing piece, and they make combination viable. With the right system architecture, we’re on the verge of truly solving prior art search.

We think that’s transformational for the patent system. Not only will it cut the cost of prior art search to a tiny fraction of what it is today, it will also make search more comprehensive than what any individual searcher can achieve.

Most companies building AI patent tools today are focused on the reasoning and UX layer using off-the-shelf embeddings or BM25 for retrieval. They are investing their energy in what happens after results come back. That’s understandable and valuable. But it means they’re building on a foundation with a low ceiling.

Pushing the boundaries of patent retrieval forward changes what’s possible. We’ve decided to open up our embeddings and structured patent data so others can build on better foundations. We have an API and MCP server in closed beta right now. If you’re building AI tools for patents, whether you’re in-house counsel with an internal tooling team or a vendor building products, we want to work with you. The future of AI in patent work isn’t a monolithic platform; it’s a community effort to raise the bar.

Interested in building on better foundations? Get in touch with us at info@amplified.ai.